With some simple tuning, SSHFS performance is comparable to NFS almost across the board. In an effort to get even more performance from SSHFS, we examine SSHFS-MUX, which allows you to combine directories from multiple servers into a single mountpoint.

Combining Directories on a Single Mountpoint with SSHFS-MUX

In a previous article I talked about SSHFS, a userspace filesystem that allows you to mount a remote filesystem via SFTP over SSH using the FUSE library. It is a very cool concept for a shared filesystem with reasonable security courtesy of SSH. Although the encryption and decryption processes increase CPU usage on both the server and client, a few tuning techniques will bring performance fairly close to NFS.

FUSE filesystems are easy to write and maintain compared with kernel-based filesystems, leading to a proliferation of userspace filesystems. One, SSHFS-MUX, builds on SSHFS to allow you to combine directories from different hosts into a single mountpoint. “MUX” is short for multiplexer, a device that selects one of several input signals and forwards it as a single output. Here is a simple text diagram from the SSHFS-MUX website that illustrates how this works:

    host1:        host2:         host3:
    directory1    directory2     mountpoint
       /       +      \      ==>   /    \
    data1            data2      data1  data2

In this case, I’m combining directory1 from host1 with directory2 from host2 into a single mountpoint on host3.
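As a rough sketch of what the diagram means on the command line, the snippet below only prints a mount command rather than running it. It assumes that sshfsm accepts one or more host:directory sources followed by the mountpoint, as the project page describes; the helper name mux_mount_cmd is made up for illustration.

```shell
#!/bin/sh
# Dry run: print (do not execute) an sshfsm command line.
# Assumption: sshfsm takes one or more host:dir sources, then the mountpoint.
mux_mount_cmd() {
    # Need at least one source and the mountpoint.
    if [ "$#" -lt 2 ]; then
        echo "usage: mux_mount_cmd host:dir... mountpoint" >&2
        return 1
    fi
    printf 'sshfsm'
    for arg in "$@"; do
        printf ' %s' "$arg"
    done
    printf '\n'
}

# The example from the diagram: two hosts, one mountpoint on host3.
mux_mount_cmd host1:directory1 host2:directory2 mountpoint
```

Printing the command first makes it easy to check the order of the sources before actually mounting anything.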

Although SSHFS-MUX (SSHFSM) might generate some yawns, “muxing” directories is actually very powerful. To illustrate this power, I’ll look at a situation in which I’m a user on a system with my /home/layton directory on my local system (host = desktop). I also access an HPC system that has its own /home/jlayton directory (the login node is login1). On the HPC system I only keep some basic source and job scripts in /home/jlayton. To run my job, I have a third filesystem, /project/laytonj, where I store my input and output data. I can combine the HPC filesystems on my desktop into a single directory using SSHFS-MUX:

    login1:       login1:        desktop:
    directory1    directory2     mountpoint
       /       +      \      ==>   /    \
    data1            data2      data1  data2

This allows me to see both my “home” and “project” directories as a single mountpoint on my desktop, making my life much easier for organizing data.

An alternative scenario revolves around load balancing. Recall that SSHFS does encryption and decryption, which puts more load on the CPUs on the host and client systems. However, if I have SSH access to two systems that mount the same directory structure, I can mount different directories from different servers, effectively load balancing between the servers. For example, say I want to mount data contained in a Lustre server on my desktop. A directory /home/layton/PROJECT has a number of subdirectories (named in caps). Specifically, I have two major subdirectories, /home/layton/PROJECT/FOO and /home/layton/PROJECT/BAR, that I want to mount on my desktop.

    /home/layton/
         |
    /home/layton/PROJECT
       /      \
     FOO      BAR

I could use SSHFS and mount /home/layton/PROJECT onto my desktop from a single system, gateway1, a Lustre client, but then performance is limited by that one server:

    gateway1:                      desktop:
    /home/layton/PROJECT    ==>    /home/layton/REMOTE_PROJECT
       /      \                       /      \
     FOO      BAR                   FOO      BAR

On the other hand, using SSHFSM, I could use two different servers, gateway1 and gateway2, to mount FOO and BAR separately:

    gateway1:                      gateway2:                         desktop:
    /home/layton/PROJECT/FOO   +   /home/layton/PROJECT/BAR   ==>    /home/layton/REMOTE_PROJECT
                                                                        /      \
                                                                      FOO      BAR

The advantage of this approach is that the load of accessing data in FOO and BAR is spread across two servers. This can ease the encryption and decryption burden across two servers instead of just one.

SSHFSM originated from research at The University of Tokyo by Dun Nan, who is now a Postdoctoral Scholar at the University of Chicago. It is based on a version of SSHFS from around the 2010 time frame. This corresponds to about version 2.2 of SSHFS, which is from 2008. SSHFS is now up to version 2.5, which was released on January 14, 2014; however, testing I’ve done hasn’t revealed any differences, although I’m sure there are some.

Installing SSHFS-MUX on Linux

Installing SSHFSM is as easy as installing SSHFS. If you look at my article on SSHFS, you can see the steps I used. In the interest of completeness, the basic steps for building SSHFSM are

    $ ./configure --prefix=/usr
    $ make
    $ make install

where the last step is performed as root. Be sure to read the SSHFSM page for package prerequisites. Notice that I installed SSHFSM into /usr. The SSHFSM binary is installed in /usr/bin, which is in the standard path. The binary is named sshfsm, so SSHFSM can be installed alongside SSHFS.

To make sure everything is installed correctly, simply try the command sshfsm -V:

    [laytonjb@test8 ~]$ sshfsm -V
    SSHFSM version 1.3
    FUSE library version: 2.8.3
    fusermount version: 2.8.3
    using FUSE kernel interface version 7.12
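Before running the version check, it can help to confirm that the binaries are actually on the path. The following is a minimal sketch; the helper name have_cmd is made up for illustration, and it only reports what it finds.

```shell
#!/bin/sh
# Return 0 if the named command is found on PATH, non-zero otherwise.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# sshfsm is the SSHFS-MUX binary; fusermount ships with FUSE.
for tool in sshfsm fusermount; do
    if have_cmd "$tool"; then
        echo "$tool: found at $(command -v "$tool")"
    else
        echo "$tool: not found in PATH"
    fi
done
```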

Because I’m interested in using SSHFS-MUX just as I would SSHFS, I’m not going to test the MUX features of SSHFS-MUX.

SSHFS-MUX Performance on Linux

As with SSHFS, I want to test the performance of SSHFSM using some tuning options, so I’ll follow the same testing pattern I did with SSHFS. I don’t expect too many differences, but without testing, I won’t know for sure. For the sake of completeness, I’m repeating some of the details from the original SSHFS article.

On my desktop I have a Samsung 840 SSD attached via a SATA III connection (6Gbps) and mounted as /data. It is formatted with ext4 using the defaults (I’m running CentOS 6.5). I will use this as the filesystem for testing. The desktop system has the following basic characteristics:

- Intel Core i7-4770K processor (four cores, eight with HyperThreading, running at 3.5GHz)

- 32GB of memory (DDR3-1600)

- GigE NIC

- Simple GigE switch

- CentOS 6.5 (updates current as of March 29, 2014)

The test system that mounts the desktop storage has the following characteristics:

- CentOS 6.5 (updates current as of March 29, 2014)

- GigaByte MAA78GM-US2H motherboard

- AMD Phenom II X4 920 CPU (four cores)

- 8GB of memory (DDR2-800)

- GigE NIC

As before, I’ll be using IOzone to run three tests on NFS, SSHFS, and SSHFSM: (1) sequential writes and re-writes, (2) sequential reads and re-reads, and (3) random read and write IOPS (4KB record sizes).

The testing starts with baseline results using the defaults that come with the Linux distributions, then I’ll test tuned configurations of two types: SSHFSM mount options (same as SSHFS for the most part) and TCP tuning on both the server (my desktop) and the client (the test system). The second type of tuning affects the NFS results as well.

For these tests, I use the defaults in CentOS 6.5 for SSHFS, SSHFSM, and TCP. In the case of NFS, I export /data/laytonjb from my desktop (192.168.1.4), which takes on the role of a server, to my test machine (192.168.1.250), which takes on the role of the client. I then mount the filesystem as /mnt/data on the test system. In the case of SSHFS and SSHFSM I mount /data/laytonjb as /home/laytonjb/DATA.
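The baseline mounts described above might look roughly like the following. This is a dry-run sketch that only prints the commands; it assumes the NFS export is already configured on the desktop, and the function names are made up for illustration.

```shell
#!/bin/sh
# Dry run: print the baseline mount commands instead of executing them.
SERVER=192.168.1.4            # desktop acting as the server
REMOTE_DIR=/data/laytonjb     # directory shared from the desktop

# NFS mount on the test system (run as root once the export is in place).
nfs_mount_cmd() {
    echo "mount -t nfs $SERVER:$REMOTE_DIR /mnt/data"
}

# SSHFS or SSHFSM mount as a regular user; $1 selects the binary.
fuse_mount_cmd() {
    echo "$1 $SERVER:$REMOTE_DIR /home/laytonjb/DATA"
}

# Unmounting a FUSE filesystem does not require root.
fuse_umount_cmd() {
    echo "fusermount -u /home/laytonjb/DATA"
}

nfs_mount_cmd
fuse_mount_cmd sshfs
fuse_mount_cmd sshfsm
fuse_umount_cmd
```

Because SSHFS and SSHFSM share the same mountpoint in these tests, one has to be unmounted before the other is mounted.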

The first test I ran was a sequential write test with IOzone, using the same command line for both NFS and SSHFS:

./iozone -i 0 -r 64k -s 16G -w -f iozone.tmp > iozone_16G_w.out

The command only runs the sequential write and re-write tests (-i 0) using 64KB record sizes (-r 64k) with a 16GB file (-s 16G). I also kept the file for the read tests (-w).

The sequential read tests are very similar to the write tests. The IOzone command line is:

./iozone -i 1 -r 64k -s 16G -f iozone.tmp > iozone_16G_r.out

The command only runs the sequential read and re-read tests (-i 1) using the same record size and file size as the sequential write tests.

The random IOPS testing was also done using IOzone. The command line is pretty simple as well:

./iozone -i 2 -r 4k -s 8G -w -O -f iozone.tmp > iozone_8G_random_iops.out

The option -i 2 runs random read and write tests. I chose to use a record size of 4KB (typical for IOPS testing) and an 8GB file. (It took a long time to complete the tests using a 16GB file.) I also output the results in operations/second (-O) to get the IOPS results easily in the output file.

Normally, if I were running real benchmarks, I would run the tests several times and report the average and standard deviation. However, I’m not running the tests for benchmarking purposes; rather, I want a performance comparison between SSHFSM and NFS. I did run the tests a few times, though, to get a feel for the variation in the results and make sure it wasn’t too large.

Five sets of test cases were run.

1. Baseline Test Set

The first set are baseline runs that used the CentOS 6.5 defaults for NFS, SSHFS, and SSHFSM.

2. SSHFS-MUX Optimizations (OPT1)

In the second set of tests, I changed some SSHFSM mount options to improve performance. These results are labeled “OPT1.” The specific mount options are:

- -o cache=yes

- -o kernel_cache

- -o compression=no

- -o large_read

- -o Ciphers=arcfour

- -o big_writes

- -o auto_cache

The first option turns caching on; the second option allows the kernel to cache, as well. The third option turns compression off, and the fourth option allows large reads, which might help with read performance.

The fifth option switches the encryption algorithm to arcfour, which is about the fastest cipher available, with performance very close to using no encryption at all. Although it doesn’t provide the best encryption, it is fast, and I’m looking for the fastest possible performance.

The sixth option, -o big_writes, enables writes larger than 4KB. The seventh option, -o auto_cache, enables caching based on modification times.
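Putting the seven options together, the OPT1 mount command could be assembled roughly as follows. This is a dry-run sketch that only prints the command; it relies on the FUSE convention that several options can be given as one comma-separated -o argument, and the helper name join_opts is made up for illustration.

```shell
#!/bin/sh
# Join the arguments into one comma-separated option string.
join_opts() {
    out=""
    for o in "$@"; do
        out="${out:+$out,}$o"
    done
    printf '%s\n' "$out"
}

# The seven OPT1 mount options, in the order listed above.
OPT1=$(join_opts cache=yes kernel_cache compression=no large_read \
                 Ciphers=arcfour big_writes auto_cache)

# Print the resulting mount command (not executed here).
echo "sshfsm 192.168.1.4:/data/laytonjb /home/laytonjb/DATA -o $OPT1"
```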

3. SSHFS-MUX and TCP optimizations (OPT2)

The third set of tests is designed to improve performance even further using some TCP tuning options. These tests build on the second set of tests, supplying both SSHFSM and TCP tuning options. These results are labeled “OPT2.”

The TCP optimizations primarily affect the TCP wmem (write buffer size) and rmem (read buffer size) values along with some maximum and default values. These tuning options were taken from an article on OpenSSH tuning. The parameters were put into /etc/sysctl.conf. Additionally, the MTU for both the client and server was increased, but the client could only be set to a maximum of 6128. The full list of changes includes:

- MTU increase

  - 9000 on “server” (Intel node)

  - 6128 on “client” (AMD node)

- sysctl.conf values:

  - net.ipv4.tcp_rmem = 4096 87380 8388608

  - net.ipv4.tcp_wmem = 4096 87380 8388608

  - net.core.rmem_max = 8388608

  - net.core.wmem_max = 8388608

  - net.core.netdev_max_backlog = 5000

  - net.ipv4.tcp_window_scaling = 1

I cannot vouch for each and every network setting, but adjusting the wmem and rmem values is a very common method for improving NFS performance.
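As a sketch of how these settings could be applied, the snippet below prints a sysctl.conf fragment and the MTU commands rather than changing the system. Note that the kernel spells the core keys with underscores (net.core.rmem_max, net.core.wmem_max, net.core.netdev_max_backlog). The interface name eth0 is a placeholder, and the function names are made up for illustration.

```shell
#!/bin/sh
# Emit the TCP settings as a sysctl.conf fragment on stdout.
tcp_tuning_fragment() {
    cat <<'EOF'
net.ipv4.tcp_rmem = 4096 87380 8388608
net.ipv4.tcp_wmem = 4096 87380 8388608
net.core.rmem_max = 8388608
net.core.wmem_max = 8388608
net.core.netdev_max_backlog = 5000
net.ipv4.tcp_window_scaling = 1
EOF
}

# Print the command that would set the MTU on an interface.
# $1 = interface name (eth0 below is only a placeholder), $2 = MTU.
mtu_cmd() {
    echo "ip link set dev $1 mtu $2"
}

tcp_tuning_fragment
mtu_cmd eth0 9000   # server (Intel node)
mtu_cmd eth0 6128   # client (AMD node)
```

After appending the fragment to /etc/sysctl.conf as root, running sysctl -p loads the new values without a reboot.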

4. SSHFS-MUX and TCP Optimizations – Increased Encryption (OPT3)

Up to this point in the testing I changed the encryption to a cipher that had a minimal effect on performance. But what happens if I use the default encryption (aes-128)? Does performance take a nose dive, or does it stay fairly competitive with NFS? The fourth set of tests for SSHFSM starts with the options for SSHFSM OPT2 but removes the option -o Ciphers=arcfour from the SSHFSM mount command. SSH then falls back to its default cipher, which I believe is aes-128. The performance results are labeled “SSHFSM OPT3.”

5. SSHFS-MUX and TCP Optimizations – Compression (OPT4)

Depending on your situation, you might need stronger encryption than arcfour but still want better performance. Does SSHFSM have any options that can help? In the fifth set of tests, I started with the SSHFSM OPT3 options and turned compression on, hoping it might improve performance, or at least illustrate the effect of compression on performance. The results with compression turned on are labeled “SSHFSM OPT4.”
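To make the relationship between the option sets concrete, here is a small sketch that derives the OPT3 and OPT4 mount options from the tuned set by plain string editing. It only prints option strings and mounts nothing; the function names are made up for illustration.

```shell
#!/bin/sh
# Tuned SSHFSM mount options (OPT1 and OPT2 share the same option set).
OPT2="cache=yes,kernel_cache,compression=no,large_read,Ciphers=arcfour,big_writes,auto_cache"

# OPT3: drop the cipher override so SSH uses its default cipher.
drop_cipher() {
    printf '%s\n' "$1" | sed -e 's/Ciphers=[^,]*,//' -e 's/,Ciphers=[^,]*$//'
}

# OPT4: same options as OPT3, but with compression switched on.
enable_compression() {
    printf '%s\n' "$1" | sed 's/compression=no/compression=yes/'
}

OPT3=$(drop_cipher "$OPT2")
OPT4=$(enable_compression "$OPT3")

echo "OPT3: -o $OPT3"
echo "OPT4: -o $OPT4"
```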

Test Results

Table 1 lists the IOzone results for NFS and SSHFS from the previous article with the results for the five SSHFSM test sets:

- Baseline: Use all system and SSHFS-MUX defaults

- OPT1: SSHFS-MUX mount option tuning

- OPT2: OPT1 with TCP tuning

- OPT3: OPT2 with stronger encryption (aes-128)

- OPT4: OPT3 with compression turned on

Table 1: Results of Five Test Sets for All Six Tests

| Test | Sequential Write (MBps) | Sequential Re-write (MBps) | Sequential Read (MBps) | Sequential Re-read (MBps) | Random Write IOPS | Random Read IOPS |
| --- | --- | --- | --- | --- | --- | --- |
| NFS baseline | 83.996 | 87.391 | 114.301 | 114.314 | 13,554 | 5,095 |
| NFS TCP optimizations | 96.334 | 92.349 | 120.010 | 120.189 | 13,345 | 5,278 |
| SSHFS baseline | 43.182 | 49.459 | 54.757 | 63.667 | 12,574 | 2,859 |
| SSHFSM baseline | 43.207 | 52.068 | 54.746 | 63.437 | 12,848 | 2,892 |
| SSHFS OPT1 | 81.646 | 85.747 | 112.987 | 131.187 | 21,533 | 3,033 |
| SSHFSM OPT1 | 85.904 | 88.210 | 112.963 | 130.861 | 20,447 | 3,315 |
| SSHFS OPT2 | 111.864 | 119.196 | 119.153 | 136.081 | 29,802 | 3,237 |
| SSHFSM OPT2 | 113.567 | 120.037 | 119.072 | 136.104 | 29,802 | 3,038 |
| SSHFS OPT3 | 51.325 | 56.328 | 58.231 | 67.588 | 14,100 | 2,860 |
| SSHFSM OPT3 | 54.595 | 61.111 | 67.107 | 67.379 | 13,958 | 2,670 |
| SSHFS OPT4 | 78.600 | 79.589 | 158.020 | 158.925 | 19,326 | 2,892 |
| SSHFSM OPT4 | 83.120 | 86.599 | 156.035 | 155.923 | 19,439 | 2,767 |

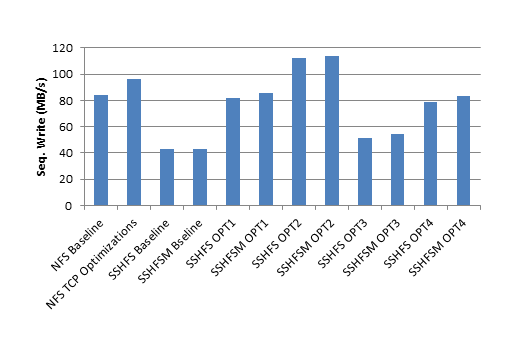

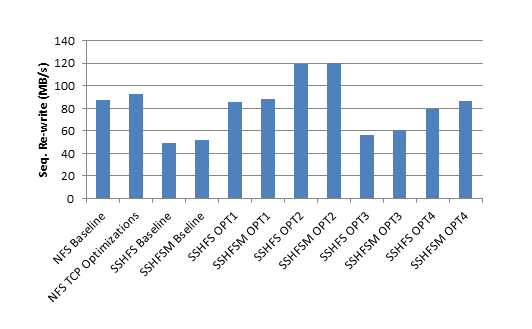

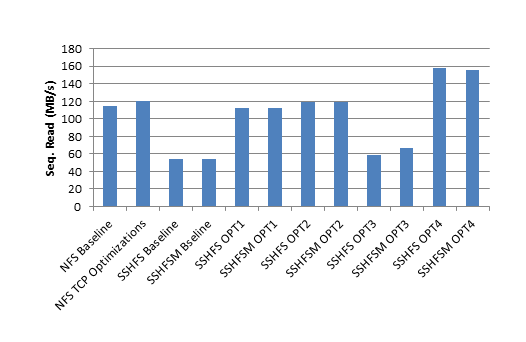

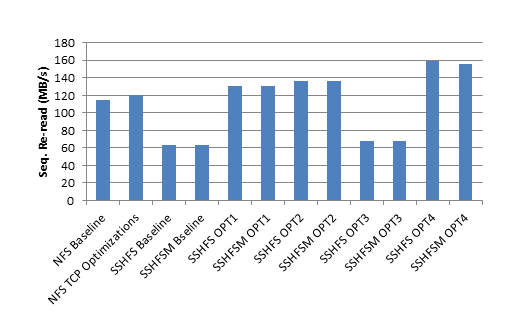

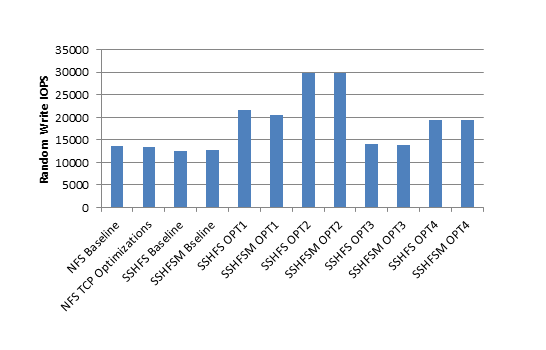

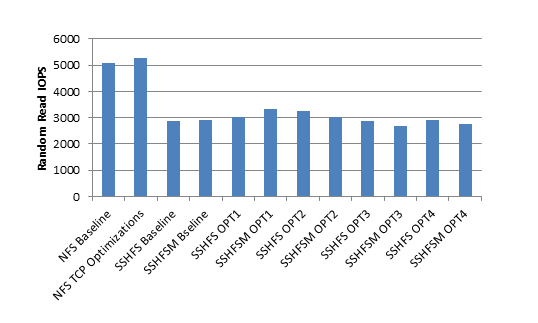

To help visualize the data, the six bar charts in Figures 1-6 plot the results for all five test sets, one for each test.

Figure 1: Sequential Write Test: Plot of Baseline, OPT1, OPT2, OPT3, and OPT4 IOzone results for NFS, SSHFS, and SSHFSM.

Figure 2: Sequential Re-write Test: Plot of Baseline, OPT1, OPT2, OPT3, and OPT4 IOzone results for NFS, SSHFS, and SSHFSM.

Figure 3: Sequential Read Test: Plot of Baseline, OPT1, OPT2, OPT3, and OPT4 IOzone results for NFS, SSHFS, and SSHFSM.

Figure 4: Sequential Re-read Test: Plot of Baseline, OPT1, OPT2, OPT3, and OPT4 IOzone results for NFS, SSHFS, and SSHFSM.

Figure 5: Random Write IOPS Test: Plot of Baseline, OPT1, OPT2, OPT3, and OPT4 IOzone results for NFS, SSHFS, and SSHFSM.

Figure 6: Random Read IOPS Test: Plot of Baseline, OPT1, OPT2, OPT3, and OPT4 IOzone results for NFS, SSHFS, and SSHFSM.

Comparing the performance of SSHFS and SSHFSM is rather interesting. In general, the performance is quite close except in a couple of instances. For example, in the sequential write tests, SSHFSM is roughly 5% to 6% faster than SSHFS in the OPT1, OPT3, and OPT4 runs. SSHFSM in the OPT4 results for the sequential re-write test is almost 9% faster than SSHFS. For the sequential read, re-read, and random read IOPS, SSHFS is sometimes slightly faster than SSHFSM. However, this comparison might not be that useful because the “spread” of the performance results is unknown, and one can’t conclusively say one is faster than the other.

Comparing SSHFS and SSHFSM performance to NFS is perhaps a little more useful from a qualitative standpoint. When all the SSHFSM and TCP optimizations are used, SSHFS and SSHFSM have better write performance (sequential write and re-write, and random write IOPS). Even if a stronger encryption is used, which requires more computational capability, SSHFS and SSHFSM can be just as good or better than NFS for the write tests. However, read performance for SSHFS and SSHFSM is worse or only close to NFS performance until compression is turned on (compression turned on results are OPT4). One remarkable result is that SSHFS and SSHFSM performance for the random read IOPS test is never better than about 65% that of NFS.
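The relative differences quoted in this discussion can be recomputed from Table 1 with a couple of one-line awk helpers. This is only a convenience sketch; the function names are made up for illustration, and the numbers passed in are copied from the table.

```shell
#!/bin/sh
# Percentage by which value a exceeds value b.
pct_faster() {
    awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", (a - b) / b * 100 }'
}

# Value a expressed as a percentage of value b.
pct_of() {
    awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", a / b * 100 }'
}

pct_faster 85.904 81.646   # SSHFSM vs. SSHFS, OPT1 sequential write
pct_faster 86.599 79.589   # SSHFSM vs. SSHFS, OPT4 sequential re-write
pct_of 3315 5095           # SSHFSM OPT1 random read IOPS vs. NFS baseline
```

Keep in mind that these are single-run numbers, so small percentage differences should be read with the unknown run-to-run spread in mind.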

Summary

SSHFS has great potential as a shared filesystem. It encrypts data in flight, it allows users to control what filesystems they mount, and it only requires an open SSH port (port 22). With some tuning, you can get SSHFS performance on the same level as NFS, except for the random read IOPS performance. However, the price you pay is data encryption or decryption on both the client and server.

In the pursuit of even more performance, I tried SSHFS-MUX. SSHFSM is derived from SSHFS and has been extended to allow several directories to be mounted in a single mountpoint. I didn’t test this feature, but I did test the performance of SSHFS-MUX relative to SSHFS. Although the tests I ran here are not as extensive as those I would perform for benchmarking, casual testing allowed me to gauge general performance differences. In some cases SSHFS-MUX was a little faster than SSHFS, but the difference was fairly small, only about 5% in some cases.

For anyone considering using SSHFS, SSHFS-MUX, with its ability to combine directories from different servers into a single mountpoint, is a definite option.